Creating a ROSA cluster with PrivateLink enabled (custom VPC) and STS

This content is authored by Red Hat experts, but has not yet been tested on every supported configuration.

This guide combines the PrivateLink and STS setup documents to show the full picture.

Prerequisites

AWS Preparation

If this is a brand new AWS account that has never had an AWS Load Balancer installed in it, run the following:

    aws iam create-service-linked-role --aws-service-name \
      "elasticloadbalancing.amazonaws.com"

Create the AWS Virtual Private Cloud (VPC) and Subnets

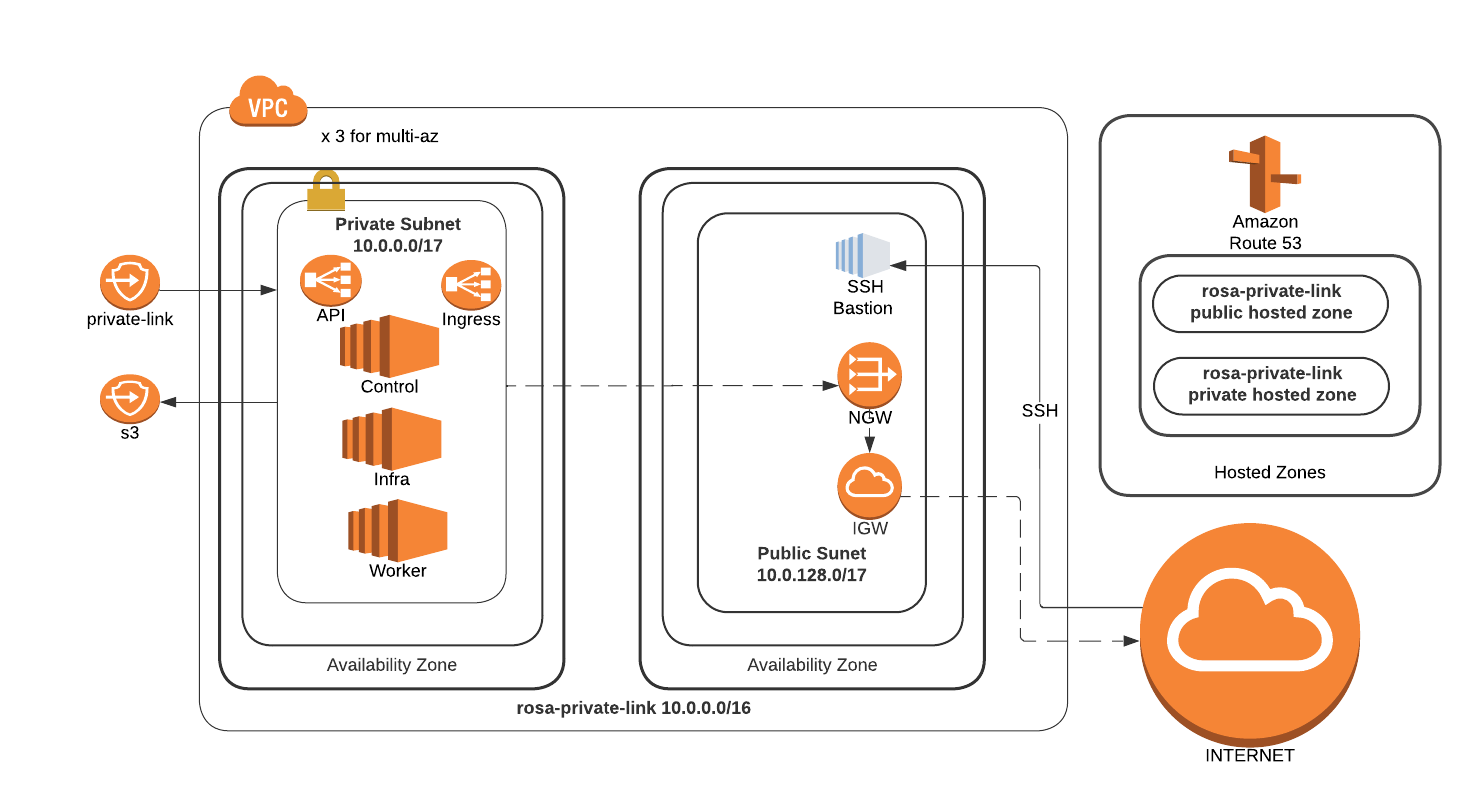

For this scenario, we will be using a newly created VPC with both public and private subnets. All of the cluster resources will reside in the private subnet. The public subnet will be used for egress traffic to the Internet.

Note: If you already have a Transit Gateway (TGW) or similar, you can skip the public subnet configuration.

Note: When creating subnets, make sure they are created in availability zones (AZs) that have ROSA instance types available. If the AZ is not forced, the subnet is created in a random AZ in the region. Force the AZ using the --availability-zone argument in the create-subnet command.

Use rosa list instance-types to list the ROSA instance types.

Use aws ec2 describe-instance-type-offerings to check that your desired AZ supports your desired instance type.

Example using us-east-1, us-east-1b, and m5.xlarge:

    aws ec2 describe-instance-type-offerings --location-type availability-zone \
      --filters Name=location,Values=us-east-1b --region us-east-1 \
      --output text | grep -F m5.xlarge

The result should display INSTANCETYPEOFFERINGS [instance-type] [az] availability-zone if your selected AZ supports your desired instance type.
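The grep in the example matches the instance type anywhere in the line. A slightly stricter check compares whole columns of the text output instead. The sketch below assumes the line layout described above; the function name offers_instance_type is hypothetical and not part of the AWS or ROSA CLIs.

```shell
# Hypothetical helper: succeed only if stdin contains an offering line whose
# instance-type and AZ columns match exactly.
# Assumed line layout (taken from the result format described in this guide):
#   INSTANCETYPEOFFERINGS <instance-type> <az> availability-zone
offers_instance_type() {
  # $1: instance type, $2: availability zone
  awk -v t="$1" -v z="$2" '
    $1 == "INSTANCETYPEOFFERINGS" && $2 == t && $3 == z { found = 1 }
    END { exit !found }
  '
}

# Usage (requires AWS credentials, so it is left commented out here):
#   aws ec2 describe-instance-type-offerings --location-type availability-zone \
#     --filters Name=location,Values=us-east-1b --region us-east-1 \
#     --output text | offers_instance_type m5.xlarge us-east-1b && echo supported
```

Because the function only reads standard input, it can be tried against saved command output before being pointed at a live account.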

Configure the following environment variables, adjusting for

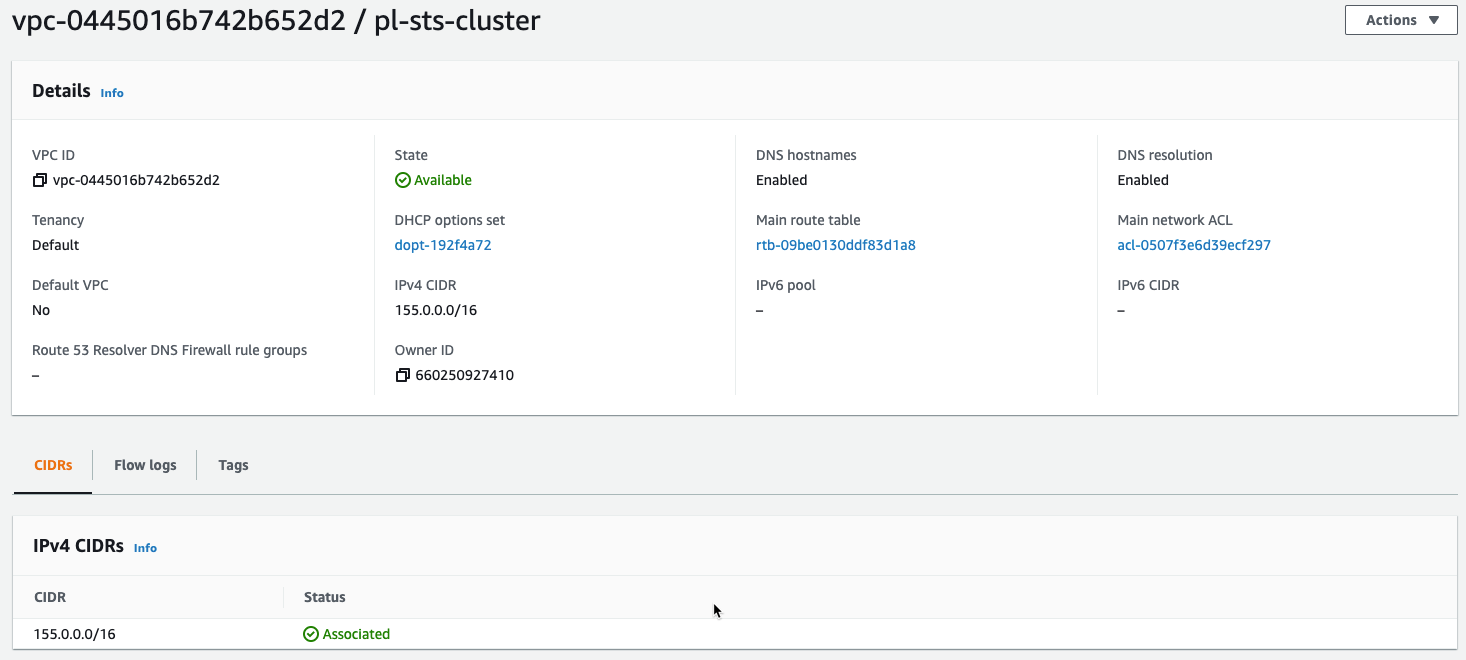

ROSA_CLUSTER_NAME,VERSIONandREGIONas necessaryexport VERSION=4.9.15 \ ROSA_CLUSTER_NAME=pl-sts-cluster \ AWS_ACCOUNT_ID=`aws sts get-caller-identity --query Account --output text` \ REGION=us-east-1 \ AWS_PAGER=""Create a VPC for use by ROSA

Create the VPC and return the ID as VPC_ID

    VPC_ID=`aws ec2 create-vpc --cidr-block 10.0.0.0/16 | jq -r .Vpc.VpcId`
    echo $VPC_ID

Tag the newly created VPC with the cluster name

    aws ec2 create-tags --resources $VPC_ID \
      --tags Key=Name,Value=$ROSA_CLUSTER_NAME

Configure the VPC to allow DNS hostnames for their public IP addresses

    aws ec2 modify-vpc-attribute --vpc-id $VPC_ID --enable-dns-hostnames

The new VPC should be visible in the AWS console
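Every later step depends on the variables captured so far. When an aws call fails, the jq -r pattern used in this guide can leave a variable empty, and it prints the literal string null when the requested key is missing. The following is a minimal sketch of a guard that can be run between steps; the function name require_vars is hypothetical.

```shell
# Hypothetical helper: fail if any named variable is unset, empty, or the
# literal string "null" (what jq -r prints for a missing key).
# The body runs in a subshell so its loop variables do not leak.
require_vars() (
  for name in "$@"; do
    # Indirectly read the variable whose name is stored in $name.
    eval "value=\${$name-}"
    if [ -z "$value" ] || [ "$value" = "null" ]; then
      echo "missing or invalid value for $name" >&2
      return 1
    fi
  done
)

# Usage:
#   require_vars VERSION ROSA_CLUSTER_NAME AWS_ACCOUNT_ID REGION VPC_ID || exit 1
```

The same call can be repeated after each later section with the newly created IDs (for example PUBLIC_SUBNET or NAT_GW) added to the argument list.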

Create a Public Subnet to allow egress traffic to the Internet

Create the public subnet in the VPC CIDR block range and return the ID as

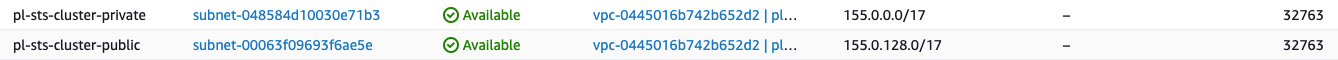

PUBLIC_SUBNETPUBLIC_SUBNET=`aws ec2 create-subnet --vpc-id $VPC_ID --cidr-block 10.0.128.0/17 | jq -r .Subnet.SubnetId` echo $PUBLIC_SUBNETTag the public subnet with the cluster name

aws ec2 create-tags --resources $PUBLIC_SUBNET \ --tags Key=Name,Value=$ROSA_CLUSTER_NAME-public

Create a Private Subnet for the cluster

Create the private subnet in the VPC CIDR block range and return the ID as PRIVATE_SUBNET

    PRIVATE_SUBNET=`aws ec2 create-subnet --vpc-id $VPC_ID \
      --cidr-block 10.0.0.0/17 | jq -r .Subnet.SubnetId`
    echo $PRIVATE_SUBNET

Tag the private subnet with the cluster name

    aws ec2 create-tags --resources $PRIVATE_SUBNET \
      --tags Key=Name,Value=$ROSA_CLUSTER_NAME-private

Both subnets should now be visible in the AWS console
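The two subnets split the 10.0.0.0/16 VPC range into two /17 halves. If you adjust the CIDR blocks, it helps to confirm that each subnet still lies inside the VPC range and that the subnets do not overlap. The sketch below does this with plain shell arithmetic; the function names are hypothetical, and it assumes IPv4 CIDRs written without leading zeros.

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  echo "$1" | {
    IFS=. read -r a b c d
    echo $(( (a << 24) + (b << 16) + (c << 8) + d ))
  }
}

# Print "first last" integer bounds of a CIDR block such as 10.0.128.0/17.
cidr_range() {
  base=$(ip_to_int "${1%/*}")
  size=$(( 1 << (32 - ${1#*/}) ))
  first=$(( base - base % size ))
  echo "$first $(( first + size - 1 ))"
}

# Succeed if CIDR $1 lies entirely inside CIDR $2.
cidr_within() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -ge "$3" ] && [ "$2" -le "$4" ]
}

# Succeed if CIDRs $1 and $2 share no addresses.
cidr_disjoint() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$2" -lt "$3" ] || [ "$4" -lt "$1" ]
}

# Check the layout used in this guide.
cidr_within 10.0.128.0/17 10.0.0.0/16 &&
  cidr_within 10.0.0.0/17 10.0.0.0/16 &&
  cidr_disjoint 10.0.0.0/17 10.0.128.0/17 &&
  echo "subnet layout is consistent"
```

The same range must also cover the value later passed to --machine-cidr, which in this guide is the full VPC block.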

Create an Internet Gateway for NAT egress traffic

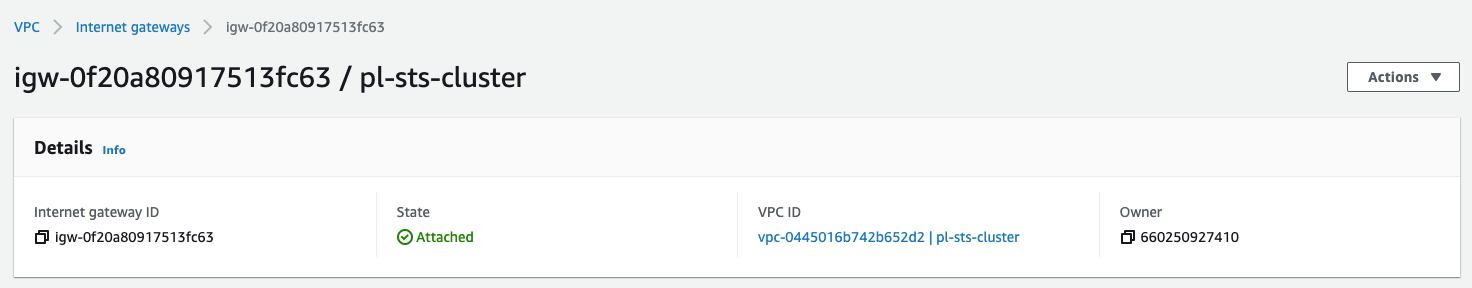

Create the Internet Gateway and return the ID as I_GW

    I_GW=`aws ec2 create-internet-gateway | jq -r .InternetGateway.InternetGatewayId`
    echo $I_GW

Attach the new Internet Gateway to the VPC

    aws ec2 attach-internet-gateway --vpc-id $VPC_ID --internet-gateway-id $I_GW

Tag the Internet Gateway with the cluster name

    aws ec2 create-tags --resources $I_GW \
      --tags Key=Name,Value=$ROSA_CLUSTER_NAME

The new Internet Gateway should be created and attached to your VPC

Create a Route Table for NAT egress traffic

Create the Route Table and return the ID as

R_TABLER_TABLE=`aws ec2 create-route-table --vpc-id $VPC_ID \ | jq -r .RouteTable.RouteTableId` echo $R_TABLECreate a route with no IP limitations (0.0.0.0/0) to the Internet Gateway

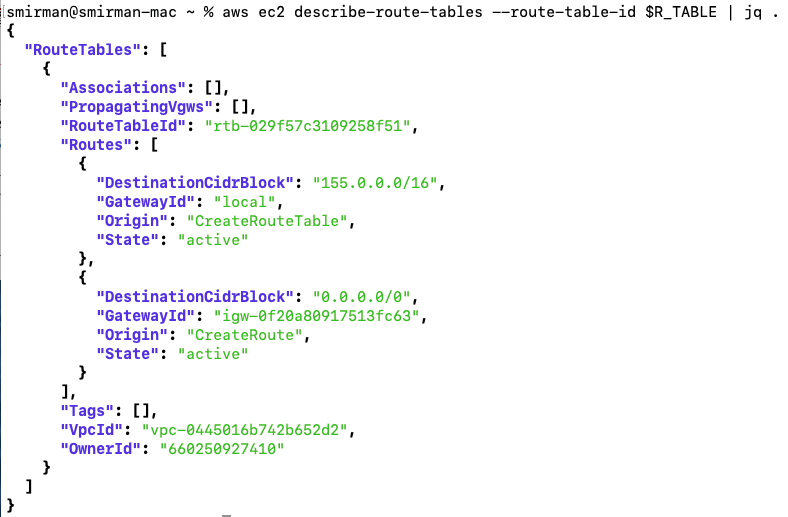

aws ec2 create-route --route-table-id $R_TABLE \ --destination-cidr-block 0.0.0.0/0 --gateway-id $I_GWVerify the route table settings

aws ec2 describe-route-tables --route-table-id $R_TABLEExample output

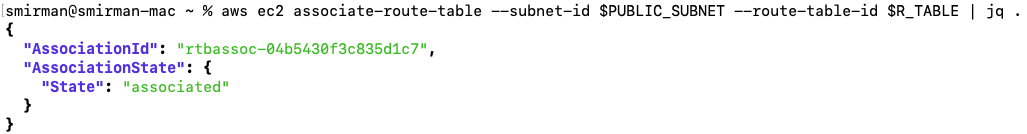

Associate the Route Table with the Public subnet

    aws ec2 associate-route-table --subnet-id $PUBLIC_SUBNET \
      --route-table-id $R_TABLE

Tag the Route Table with the cluster name

    aws ec2 create-tags --resources $R_TABLE \
      --tags Key=Name,Value=$ROSA_CLUSTER_NAME

Create a NAT Gateway for the Private network

Allocate and elastic IP address and return the ID as

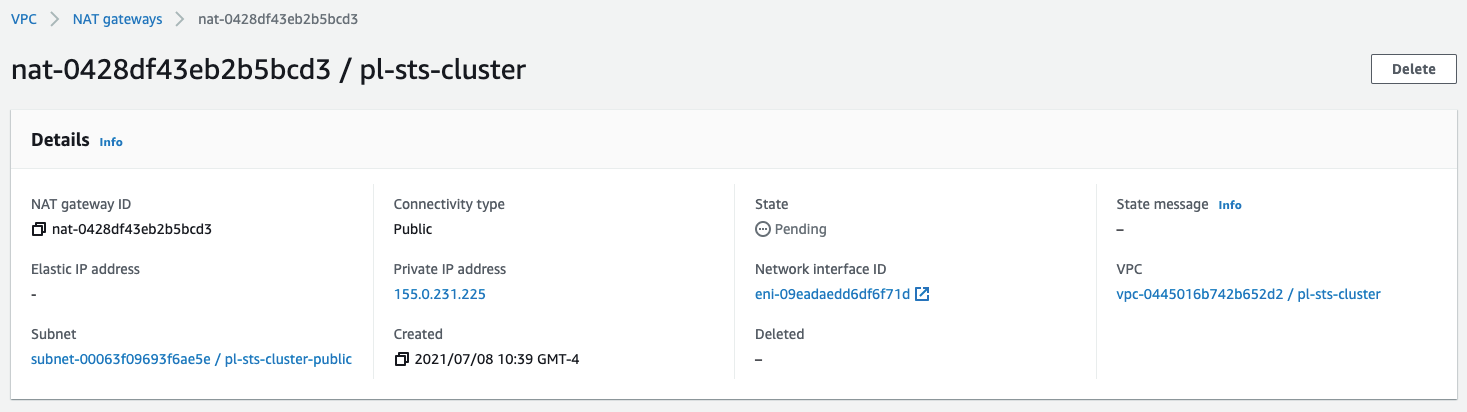

EIPEIP=`aws ec2 allocate-address --domain vpc | jq -r .AllocationId` echo $EIPCreate a new NAT Gateway in the Public subnet with the new Elastic IP address and return the ID as

NAT_GWNAT_GW=`aws ec2 create-nat-gateway --subnet-id $PUBLIC_SUBNET \ --allocation-id $EIP | jq -r .NatGateway.NatGatewayId` echo $NAT_GWTag the Elastic IP with the cluster name

aws ec2 create-tags --resources $EIP --resources $NAT_GW \ --tags Key=Name,Value=$ROSA_CLUSTER_NAMEThe new NAT Gateway should be created and associated with your VPC

Create a Route Table for the Private subnet to the NAT Gateway

Create a Route Table in the VPC and return the ID as

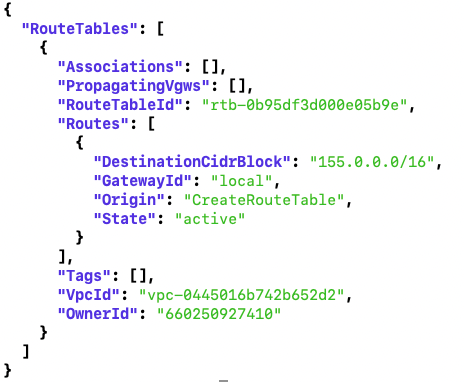

R_TABLE_NATR_TABLE_NAT=`aws ec2 create-route-table --vpc-id $VPC_ID \ | jq -r .RouteTable.RouteTableId` echo $R_TABLE_NATLoop through a Route Table check until it is created

while ! aws ec2 describe-route-tables \ --route-table-id $R_TABLE_NAT \ | jq .; do sleep 1; doneExample output!
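The loop above has no upper bound, and because a pipeline reports the exit status of its last command, it ends as soon as jq succeeds rather than when the aws call does. A bounded retry on the aws command alone is one alternative. This is a minimal sketch; the function name retry and its argument order are hypothetical.

```shell
# Hypothetical helper: run a command until it succeeds, at most $1 times,
# sleeping $2 seconds between attempts. Returns non-zero if every attempt
# fails. The body runs in a subshell so its variables do not leak.
retry() (
  attempts=$1
  delay=$2
  shift 2
  i=1
  while ! "$@"; do
    [ "$i" -ge "$attempts" ] && return 1
    i=$(( i + 1 ))
    sleep "$delay"
  done
)

# Usage (requires AWS credentials, so it is left commented out here):
#   retry 30 1 aws ec2 describe-route-tables \
#     --route-table-ids "$R_TABLE_NAT" > /dev/null || echo "route table not found"
```

With 30 attempts and a one-second delay, the check gives up after roughly half a minute instead of looping forever.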

Create a route in the new Route Table for all addresses to the NAT Gateway

    aws ec2 create-route --route-table-id $R_TABLE_NAT \
      --destination-cidr-block 0.0.0.0/0 \
      --nat-gateway-id $NAT_GW

Associate the Route Table with the Private subnet

    aws ec2 associate-route-table --subnet-id $PRIVATE_SUBNET \
      --route-table-id $R_TABLE_NAT

Tag the Route Table with the cluster name

    aws ec2 create-tags --resources $R_TABLE_NAT $EIP \
      --tags Key=Name,Value=$ROSA_CLUSTER_NAME-private

Configure the AWS Security Token Service (STS) for use with ROSA

The AWS Security Token Service (STS) allows us to deploy ROSA without needing a ROSA admin account. Instead, it uses roles and policies to gain access to the AWS resources needed to install and operate the cluster.

This is a summary of the official OpenShift docs that can be used as a line by line install guide.

Note that some commands (OIDC for STS) are hard-coded to us-east-1. Do not be tempted to change these to use $REGION instead, or the installation will fail.

Make sure your ROSA CLI version is correct (v1.1.0 or higher)

    rosa version

Create the IAM Account Roles

    rosa create account-roles --mode auto --yes

Deploy ROSA cluster

Run the rosa cli to create your cluster

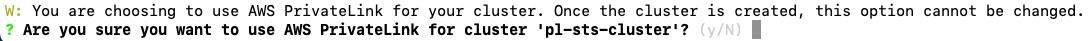

    rosa create cluster -y --cluster-name ${ROSA_CLUSTER_NAME} \
      --region ${REGION} --version ${VERSION} \
      --subnet-ids=$PRIVATE_SUBNET \
      --private-link --machine-cidr=10.0.0.0/16 \
      --sts

Confirm the PrivateLink set up

Create the Operator Roles

    rosa create operator-roles -c $ROSA_CLUSTER_NAME --mode auto --yes

Create the OIDC provider.

    rosa create oidc-provider -c $ROSA_CLUSTER_NAME --mode auto --yes

Validate that the cluster is now installing

The State should have moved beyond pending and show installing or ready.

    watch "rosa describe cluster -c $ROSA_CLUSTER_NAME"

Watch the install logs

    rosa logs install -c $ROSA_CLUSTER_NAME --watch --tail 10

Validate the cluster

Once the cluster has finished installing, it is time to validate it. Validation when using PrivateLink requires the use of a jump host.

You can create one using the AWS Console or the AWS CLI as described below:

Option 1: Create a jump host instance through the AWS Console

Navigate to the EC2 console and launch a new instance

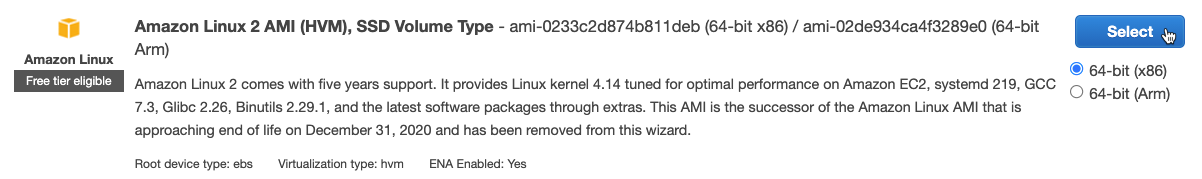

Select the AMI for your instance, if you don’t have a standard, the Amazon Linux 2 AMI works just fine

Choose your instance type, the t2.micro/free tier is sufficient for our needs, and click Next: Configure Instance Details

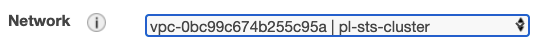

Change the Network settings to setup this host inside your private-link VPC

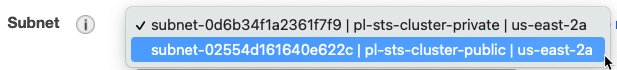

Change the Subnet setting to use the private-link-public subnet

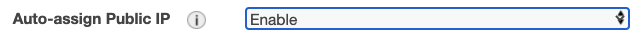

Change Auto-assign Public IP to Enable

Default settings for Storage and Tags are fine. Make the following changes in the 6. Configure Security Group tab (either by clicking through the screens or selecting from the top bar)

If you already have a security group created to allow access from your computer to AWS, choose Select an existing security group and choose that group from the list, otherwise, select Create a new security group and continue.

To allow access only from your current public IP, change the Source heading to use My IP

Click Review and Launch, verify all settings are correct, and follow the standard AWS instructions for finalizing the setup and selecting/creating the security keys.

Once launched, open the instance summary for the jump host instance and note the public IP address.

Option 2: Create a jumphost instance using the AWS CLI

Create an additional Security Group for the jumphost

    TAG_SG="$ROSA_CLUSTER_NAME-jumphost-sg"
    aws ec2 create-security-group --group-name ${ROSA_CLUSTER_NAME}-jumphost-sg \
      --description ${ROSA_CLUSTER_NAME}-jumphost-sg --vpc-id ${VPC_ID} \
      --tag-specifications "ResourceType=security-group,Tags=[{Key=Name,Value=$TAG_SG}]"

Grab the Security Group ID generated in the previous step

    PublicSecurityGroupId=$(aws ec2 describe-security-groups --filters "Name=tag:Name,Values=${ROSA_CLUSTER_NAME}-jumphost-sg" | jq -r '.SecurityGroups[0].GroupId')
    echo $PublicSecurityGroupId

Add a rule to allow SSH into the Public Security Group

    aws ec2 authorize-security-group-ingress --group-id $PublicSecurityGroupId \
      --protocol tcp --port 22 --cidr 0.0.0.0/0

(Optional) Create a Key Pair for your jumphost if you do not already have one

    aws ec2 create-key-pair --key-name $ROSA_CLUSTER_NAME-key --query 'KeyMaterial' --output text > PATH/TO/YOUR_KEY.pem
    chmod 400 PATH/TO/YOUR_KEY.pem

Define an AMI_ID to be used for your jump host

    AMI_ID="ami-0022f774911c1d690"

This AMI_ID corresponds to an Amazon Linux image in the us-east-1 region and may not be available in your region. Find the AMI ID for your region and use that instead.
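The SSH ingress rule in this option opens port 22 to 0.0.0.0/0, whereas Option 1 limits the source to your current public IP. A similar restriction can be approximated from the CLI by passing a single-host CIDR to --cidr. The sketch below validates an address and appends /32; the function name to_host_cidr is hypothetical, and looking up your public IP through https://checkip.amazonaws.com is an assumption about your environment, not something this guide requires.

```shell
# Hypothetical helper: validate a dotted-quad IPv4 address and print it as a
# single-host CIDR (a.b.c.d/32). Fails on anything that is not a valid address.
# The body runs in a subshell, so the IFS change stays local.
to_host_cidr() (
  case "$1" in
    '' | *[!0-9.]* | .* | *. | *..*) return 1 ;;
  esac
  IFS=.
  set -- $1
  [ "$#" -eq 4 ] || return 1
  for octet in "$@"; do
    [ "$octet" -le 255 ] || return 1
  done
  echo "$1.$2.$3.$4/32"
)

# Usage (requires network access and AWS credentials, so it is commented out):
#   MY_IP=$(curl -s https://checkip.amazonaws.com)
#   aws ec2 authorize-security-group-ingress --group-id $PublicSecurityGroupId \
#     --protocol tcp --port 22 --cidr "$(to_host_cidr "$MY_IP")"
```

If your public IP changes, the rule has to be updated before you can reach the jump host again.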

Launch an EC2 instance for your jumphost using the parameters defined in the earlier steps:

    TAG_VM="$ROSA_CLUSTER_NAME-jumphost-vm"
    aws ec2 run-instances --image-id $AMI_ID --count 1 --instance-type t2.micro \
      --key-name $ROSA_CLUSTER_NAME-key --security-group-ids $PublicSecurityGroupId \
      --subnet-id $PUBLIC_SUBNET --associate-public-ip-address \
      --tag-specifications "ResourceType=instance,Tags=[{Key=Name,Value=$TAG_VM}]"

This instance will be associated with a Public IP directly.

Wait until the EC2 instance is in the Running state, grab the Public IP associated with the instance, and check that the SSH port is reachable:

    IpPublicBastion=$(aws ec2 describe-instances --filters "Name=tag:Name,Values=$TAG_VM" | jq -r '.Reservations[0].Instances[0].PublicIpAddress')
    echo $IpPublicBastion
    nc -vz $IpPublicBastion 22

Create a ROSA admin user and save the login command for use later

rosa create admin -c $ROSA_CLUSTER_NAMENote the DNS name of your private cluster, use the

rosa describecommand if neededrosa describe cluster -c $ROSA_CLUSTER_NAMEupdate /etc/hosts to point the openshift domains to localhost. Use the DNS of your openshift cluster as described in the previous step in place of

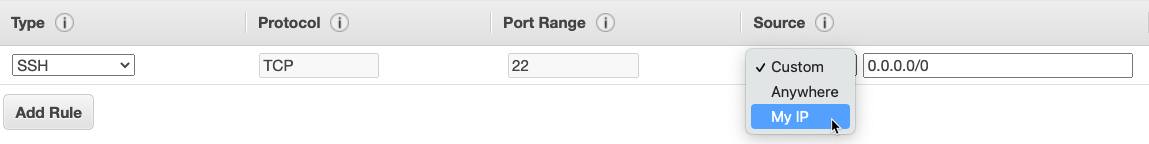

$YOUR_OPENSHIFT_DNSbelow127.0.0.1 api.$YOUR_OPENSHIFT_DNS 127.0.0.1 console-openshift-console.apps.$YOUR_OPENSHIFT_DNS 127.0.0.1 oauth-openshift.apps.$YOUR_OPENSHIFT_DNSSSH to that instance, tunneling traffic for the appropriate hostnames. Be sure to use your new/existing private key, the OpenShift DNS for

$YOUR_OPENSHIFT_DNSand your jump host IP for$YOUR_EC2_IPsudo ssh -i PATH/TO/YOUR_KEY.pem \ -L 6443:api.$YOUR_OPENSHIFT_DNS:6443 \ -L 443:console-openshift-console.apps.$YOUR_OPENSHIFT_DNS:443 \ -L 80:console-openshift-console.apps.$YOUR_OPENSHIFT_DNS:80 \ ec2-user@$YOUR_EC2_IP
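The three /etc/hosts lines follow a fixed pattern, so they can be generated from the cluster DNS name rather than typed by hand. The following is a minimal sketch; the function name hosts_entries is hypothetical.

```shell
# Hypothetical helper: print the /etc/hosts lines for a cluster DNS name.
# The body runs in a subshell so the loop variable does not leak.
hosts_entries() (
  # $1: cluster DNS name, used in place of $YOUR_OPENSHIFT_DNS
  for host in api console-openshift-console.apps oauth-openshift.apps; do
    printf '127.0.0.1 %s.%s\n' "$host" "$1"
  done
)

# Usage: append the entries (needs root) and review the result.
#   hosts_entries "$YOUR_OPENSHIFT_DNS" | sudo tee -a /etc/hosts
```

Remember to remove these entries from /etc/hosts once you no longer need the tunnel.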

From your EC2 jump instance, download the OC CLI and install it locally

- Download the OC CLI for Linux

    wget https://mirror.openshift.com/pub/openshift-v4/clients/ocp/stable/openshift-client-linux.tar.gz

- Unzip and untar the binary

    gunzip openshift-client-linux.tar.gz
    tar -xvf openshift-client-linux.tar


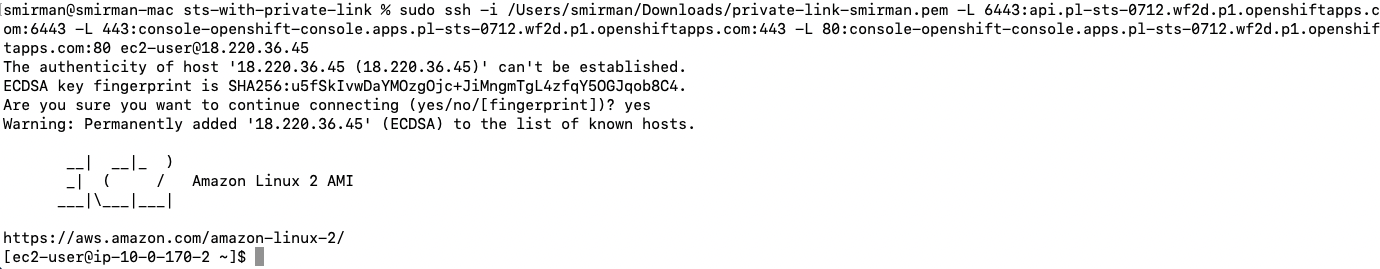

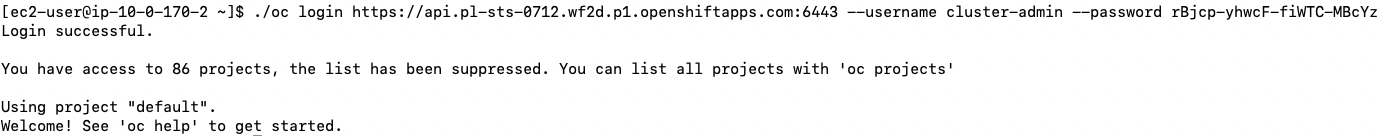

Log in to the cluster using the oc login command from the output of the create admin command above, for example:

    ./oc login https://api.$YOUR_OPENSHIFT_DNS.p1.openshiftapps.com:6443 --username cluster-admin --password $YOUR_OPENSHIFT_PWD

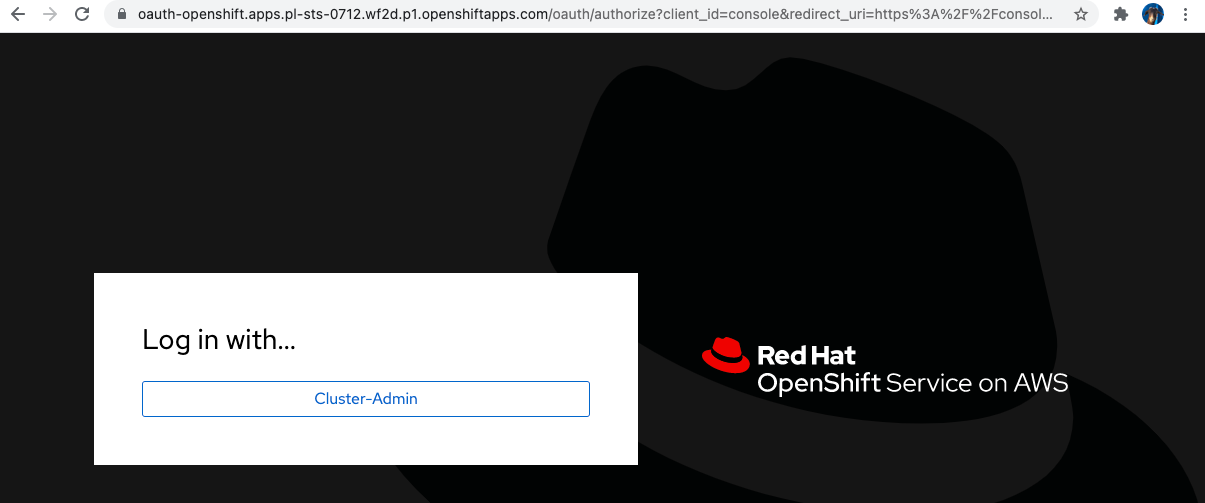

Check that you can access the Console by opening the console url in your browser.

Cleanup

Delete ROSA

    rosa delete cluster -c $ROSA_CLUSTER_NAME -y

Watch the logs and wait until the cluster is deleted

    rosa logs uninstall -c $ROSA_CLUSTER_NAME --watch

Clean up the STS roles

Note you can get the correct commands with the ID filled in from the output of the previous step.

    rosa delete operator-roles -c <id> --mode auto --yes
    rosa delete oidc-provider -c <id> --mode auto --yes

Delete AWS resources

    aws ec2 delete-nat-gateway --nat-gateway-id $NAT_GW | jq .
    aws ec2 release-address --allocation-id=$EIP | jq .
    aws ec2 detach-internet-gateway --vpc-id $VPC_ID --internet-gateway-id $I_GW | jq .
    aws ec2 delete-subnet --subnet-id=$PRIVATE_SUBNET | jq .
    aws ec2 delete-subnet --subnet-id=$PUBLIC_SUBNET | jq .
    aws ec2 delete-route-table --route-table-id=$R_TABLE | jq .
    aws ec2 delete-route-table --route-table-id=$R_TABLE_NAT | jq .
    aws ec2 delete-internet-gateway --internet-gateway-id $I_GW | jq .
    aws ec2 delete-vpc --vpc-id=$VPC_ID | jq .
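NAT Gateway deletion is asynchronous, so releasing the Elastic IP immediately afterwards can fail while the address is still attached. One way to sequence the first two cleanup commands is to wait for the deletion to finish using the AWS CLI waiter. This is a sketch only; the function name delete_nat_and_release_eip is hypothetical.

```shell
# Hypothetical helper: delete the NAT Gateway, wait for the deletion to
# finish, then release the Elastic IP that was attached to it.
# Each step runs only if the previous one succeeded.
delete_nat_and_release_eip() {
  # $1: NAT Gateway ID ($NAT_GW), $2: Elastic IP allocation ID ($EIP)
  aws ec2 delete-nat-gateway --nat-gateway-id "$1" &&
    aws ec2 wait nat-gateway-deleted --nat-gateway-ids "$1" &&
    aws ec2 release-address --allocation-id "$2"
}

# Usage (requires AWS credentials, so it is left commented out here):
#   delete_nat_and_release_eip "$NAT_GW" "$EIP"
```

The remaining cleanup commands can then be run in the order listed above.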